Last time, we discussed how the Pythagorean theorem helps us in everyday life. Today, I would like to draw your attention to pi, a beautiful constant (yet one whose digits we can never write out in full) that shows up in practically everything we see and use.

Image courtesy https://firstnewnan.com/casual-pi-day/

So, what is Pi?

The common definition is that pi is the ratio of a circle’s circumference to its diameter. How cool is that? Because all circles are similar figures (any circle is just a scaled-up or scaled-down copy of any other), ALL circles have exactly the same ratio of circumference to diameter. In math we represent it with the Greek letter π. There is a lot of interesting trivia to know about pi, so let’s start there.
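To see the “same ratio for every circle” idea in action, here is a small Python sketch in the spirit of Archimedes’ method. It is my own illustration, and the function name `circumference_over_diameter` and the choice of 20 doublings are assumptions. It inscribes a regular hexagon in circles of different radii, keeps doubling the number of sides so the polygon hugs the circle more and more closely, and then divides the perimeter by the diameter. No stored value of pi is used anywhere.

```python
import math

def circumference_over_diameter(radius: float, doublings: int = 20) -> float:
    """Estimate circumference / diameter for a circle of the given radius by
    inscribing a regular polygon and repeatedly doubling its number of sides."""
    sides = 6
    side = radius  # an inscribed regular hexagon has sides equal to the radius
    for _ in range(doublings):
        # Side of the 2n-gon from the side of the n-gon, rearranged so we never
        # subtract two nearly equal numbers (which would lose precision).
        side = side / math.sqrt(2 + math.sqrt(4 - (side / radius) ** 2))
        sides *= 2
    perimeter = sides * side
    return perimeter / (2 * radius)

for r in (0.5, 1.0, 7.0, 1000.0):
    print(f"radius {r:>7}: ratio = {circumference_over_diameter(r):.10f}")
```

Every radius should print the same ratio, and that shared value is pi.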

Pi goes on and on

π is an irrational number (a geeky way of saying it cannot be written as a fraction of two whole numbers, like 1/2). It goes 3.1415926535897932384626433… with no repeating pattern ever emerging in its decimals. For everyday math it can be approximated as 22/7. Computing as many digits of pi as possible is a standard test for supercomputers, although in practice even the most demanding applications need only a few dozen digits.
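Here is a minimal Python sketch of how digits of pi can be computed exactly using only whole-number arithmetic. It uses Machin’s 1706 formula, π/4 = 4·arctan(1/5) − arctan(1/239). That formula is my choice because it is simple; record-setting supercomputer runs use much faster series. The sketch also shows how far 22/7 (and, for comparison, 355/113) is from pi. The helper names `arctan_inverse` and `pi_digits` are hypothetical.

```python
import math

def arctan_inverse(x: int, scale: int) -> int:
    """Return arctan(1/x) * scale using the series 1/x - 1/(3x^3) + 1/(5x^5) - ...
    Only integer arithmetic is used, so `scale` sets the precision."""
    term = scale // x  # scale / x^(2k+1), starting at k = 0
    total = term
    x_squared = x * x
    n = 1
    sign = -1
    while term:
        term //= x_squared
        n += 2
        total += sign * (term // n)
        sign = -sign
    return total

def pi_digits(decimals: int) -> str:
    """Return pi with `decimals` digits after the decimal point (truncated),
    using Machin's formula: pi/4 = 4*arctan(1/5) - arctan(1/239)."""
    guard = 10  # extra digits that absorb the small rounding errors of the series
    scale = 10 ** (decimals + guard)
    pi_scaled = 4 * (4 * arctan_inverse(5, scale) - arctan_inverse(239, scale))
    text = str(pi_scaled // 10 ** guard)  # drop the guard digits
    return text[0] + "." + text[1:]

print(pi_digits(50))

# How good are simple fractions as everyday stand-ins for pi?
for label, approx in (("22/7", 22 / 7), ("355/113", 355 / 113)):
    print(f"{label:>7} = {approx:.10f}  (off by {abs(approx - math.pi):.1e})")
```

22/7 is off by only about 0.04%, which is plenty for everyday math.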

Pi is the reason you can’t square a circle!

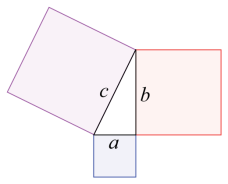

π is not just irrational, it’s also transcendental (yet another geeky way of saying it is not a root of any polynomial equation with integer coefficients). Not all irrational numbers are transcendental. For example, the square root of 2 is irrational but not transcendental, because it is a root of x² − 2 = 0. Transcendental numbers are a very special class, mostly because it is very hard to prove that any given number is one. You may have heard of “squaring the circle” as a way to say “trying the impossible”. Because pi is transcendental, you can’t use only a compass and straightedge to construct a square with exactly the same area as a given circle.
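The area argument can be written out in a few lines. This is only a sketch of the standard reasoning, not a full proof. The key step, that compass-and-straightedge constructions can only produce algebraic lengths, is quoted here rather than proved:

```latex
\begin{align*}
  \text{circle of radius } r:&\quad A = \pi r^2\\
  \text{square of side } s:&\quad A = s^2\\
  s^2 = \pi r^2 \;&\Longrightarrow\; s = r\sqrt{\pi}\\
  \sqrt{2} \text{ is a root of } x^2 - 2 = 0 \;&\Longrightarrow\; \sqrt{2} \text{ is algebraic}\\
  \pi \text{ transcendental} \;&\Longrightarrow\; \sqrt{\pi} \text{ transcendental}
    \;\Longrightarrow\; s = r\sqrt{\pi} \text{ is not constructible from } r
\end{align*}
```

Starting from a circle of radius r = 1, you would need a segment of length exactly √π ≈ 1.7725, and no finite sequence of compass and straightedge steps produces one.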

Pi has Indian connections

While the early study of pi is usually credited to Greek mathematicians such as Euclid and Archimedes, Indian mathematicians like Aryabhatta and Madhava made major contributions to proving results about it and calculating its value. Madhava, in particular, did this using infinite series. It’s also interesting how the algorithms used to calculate pi have changed over time. They started with infinite series, then moved to iterative algorithms, and then switched back to infinite series again, thanks to series based on the work of the genius mathematician Srinivasa Ramanujan.
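To compare the two kinds of infinite series, here is a minimal Python sketch. `madhava_pi` sums Madhava’s series π/4 = 1 − 1/3 + 1/5 − 1/7 + … (also known as the Leibniz series). `ramanujan_pi` sums one of Ramanujan’s 1914 series for 1/π. Both function names and the term counts are my own choices for illustration. The modern record-setting formula (the Chudnovsky brothers’ series, a refinement of Ramanujan’s) is not shown here.

```python
from decimal import Decimal, localcontext
from math import factorial

def madhava_pi(terms: int) -> float:
    """Madhava's series: pi/4 = 1 - 1/3 + 1/5 - 1/7 + ...
    Converges slowly: the error after n terms is roughly 1/n."""
    return 4 * sum((-1) ** k / (2 * k + 1) for k in range(terms))

def ramanujan_pi(terms: int, precision: int = 50) -> Decimal:
    """Ramanujan's series:
    1/pi = (2*sqrt(2)/9801) * sum_k (4k)! (1103 + 26390k) / ((k!)^4 * 396^(4k))
    Each extra term adds roughly 8 correct digits."""
    with localcontext() as ctx:
        ctx.prec = precision  # number of significant digits used by Decimal
        total = Decimal(0)
        for k in range(terms):
            numerator = factorial(4 * k) * (1103 + 26390 * k)
            denominator = factorial(k) ** 4 * 396 ** (4 * k)
            total += Decimal(numerator) / Decimal(denominator)
        inverse_pi = 2 * Decimal(2).sqrt() / 9801 * total
        return 1 / inverse_pi

print(madhava_pi(1000))  # 1000 terms: still wrong in the third decimal place
print(ramanujan_pi(3))   # 3 terms: correct to more than 20 decimal places
```

The contrast is the point. A thousand terms of the older series are beaten easily by three terms of Ramanujan’s.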

OK, now on to how pi is used in daily life.

Finding the area, volume, circumference of anything that has a curve

Most curved shapes in math are tied to a circle in some way, and all circles have pi as the ratio of their circumference to their diameter. Consequently, the formulas for curved things like circles, spheres, cones, bell curves and so on ALL have pi in them.
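Here are the everyday formulas behind that claim, written as a few tiny Python helpers. The function names and the pizza and ice-cream numbers are just illustrative. The “bell curve” entry gives the height of the normal distribution at its peak, which is where its pi shows up.

```python
import math

def circle_circumference(r: float) -> float:
    return 2 * math.pi * r           # C = 2πr

def circle_area(r: float) -> float:
    return math.pi * r ** 2          # A = πr²

def sphere_surface_area(r: float) -> float:
    return 4 * math.pi * r ** 2      # S = 4πr²

def sphere_volume(r: float) -> float:
    return 4 / 3 * math.pi * r ** 3  # V = (4/3)πr³

def cone_volume(r: float, h: float) -> float:
    return math.pi * r ** 2 * h / 3  # V = (1/3)πr²h

def bell_curve_peak(sigma: float) -> float:
    # Height of the normal distribution at its centre: 1 / (σ√(2π))
    return 1 / (sigma * math.sqrt(2 * math.pi))

# Example: a 30 cm pizza (radius 15 cm) and a scoop of ice cream (radius 3 cm)
print(f"pizza area:   {circle_area(15):.1f} cm²")
print(f"scoop volume: {sphere_volume(3):.1f} cm³")
```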

Pi is also useful with straight lines and angles

While we commonly talk about measuring angles in degrees, math geeks measure them in radians. Quite simply, a full circle is 360 degrees, or 2π radians. In other words, 1 degree is π/180 radians. Because pi is built into the way we measure angles, it features in most of trigonometry as well, even though all you see is straight lines and the angles between them. This extends to calculus and other branches of math, which we will explore later. Pi is the one constant that is everywhere!
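The degree-to-radian conversion is a one-liner. This Python sketch writes it out by hand (the name `degrees_to_radians` is mine). Python’s standard library also provides the same conversion as `math.radians()`. Trigonometric functions such as `math.sin` expect radians, which is one everyday way pi slips into trigonometry.

```python
import math

def degrees_to_radians(degrees: float) -> float:
    # A full circle is 360 degrees or 2π radians, so 1 degree = π/180 radians.
    return degrees * math.pi / 180

right_angle = degrees_to_radians(90)  # a quarter turn: π/2 radians
print(right_angle, math.pi / 2)

# math.sin works in radians, so convert before calling it.
print(math.sin(degrees_to_radians(30)))  # sine of 30 degrees, very close to 0.5
```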

Pi in the sky…and elsewhere

Nearly every aspect of transportation uses pi in its calculations. This one is personal for me, being a mechanical engineer by trade. Think of a plane or a car: everything from calculating surface area to wind resistance involves pi (remember, all curves lead to pi!). Not only that, pi is integral to navigation, be it in the air, on water or on land, and even to finding the distances between stars and planets! This is especially critical for planes, which fly along arcs over the curved surface of the Earth, and where fuel consumption calculations can be a life-or-death issue.
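Here is a minimal sketch of the great-circle (shortest-arc) distance a plane follows between two points, using the haversine formula. It assumes a perfectly spherical Earth with a mean radius of 6,371 km; real navigation systems use a more accurate ellipsoid model. Pi enters through the conversion of latitude and longitude from degrees to radians. The function name `great_circle_km` is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumption: mean radius of a perfectly spherical Earth

def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float,
                    radius: float = EARTH_RADIUS_KM) -> float:
    """Shortest distance along the surface of a sphere (haversine formula).
    Inputs are latitude/longitude in degrees."""
    # Degrees -> radians: this is where pi enters (1 degree = pi/180 radians).
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    # The clamp guards against tiny floating-point overshoot above 1.
    central_angle = 2 * math.asin(min(1.0, math.sqrt(a)))
    return radius * central_angle  # arc length = radius * angle (in radians)

# Two points on the equator, 90 degrees of longitude apart:
print(great_circle_km(0, 0, 0, 90))  # a quarter of the equator, pi * R / 2
```

For the equator example, the result is a quarter of the Earth’s circumference, πR/2, or about 10,008 km.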

Pi and the length of rivers

Prof. Stolum of Cambridge University used fractals to show that, for meandering rivers, the ratio between a river’s actual length from source to mouth and its direct distance as the crow flies tends, on average, to be close to pi!

Pi is a friend of random numbers

We saw how pi helps with curves and angles between lines. But it also shows up in seemingly unrelated domains. Here is an example: if you pick two whole numbers at random, the probability that they have no common factor (other than 1) is 6/π², or about 61%. If you are a math geek, you can find many other examples like this, such as Cesaro’s theorem and Buffon’s needle problem, where pi comes up unexpectedly.
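You can turn that probability around and estimate pi from random numbers. This Python sketch draws random pairs of whole numbers, uses `math.gcd` to count how many pairs share no common factor, and then solves fraction ≈ 6/π² for pi. The function name, sample count, number range and seed are my own assumptions.

```python
import math
import random

def estimate_pi_from_coprimes(samples: int, max_value: int = 1_000_000,
                              seed: int = 1) -> float:
    """Estimate pi from the fraction of random pairs with no common factor,
    using P(gcd(a, b) == 1) ≈ 6 / pi**2, so pi ≈ sqrt(6 / fraction)."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    coprime = 0
    for _ in range(samples):
        a = rng.randint(1, max_value)
        b = rng.randint(1, max_value)
        if math.gcd(a, b) == 1:
            coprime += 1
    fraction = coprime / samples
    return math.sqrt(6 / fraction)

print(estimate_pi_from_coprimes(100_000))
```

Because the estimate comes from random sampling, it lands close to pi but not exactly on it. More samples tighten it.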

Pi as a safety net

Whether we are designing a beam that should not break apart from vibrations (my mind is racing back to my machine design classes) or a video game (much more pleasant memories from creating games in BASIC) that should not crash when users do random things, engineers factor some “randomness” into their models to account for real life. No surprise: many of those models include pi! This follows from something we discussed above. Such probability distributions are built around the “area under a curve”. The most common one, the normal distribution (the bell curve), has 1/(σ√(2π)) in its formula so that the total area under the curve comes out to exactly 1.
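Here is a quick way to see why pi has to be there. This minimal Python sketch writes down the bell curve (normal distribution) and measures the area under it numerically with the trapezoidal rule. The function names and step count are my own choices. With the 1/(σ√(2π)) factor, the total area comes out at 1; without it, the area for σ = 1 would be √(2π) ≈ 2.5066 instead.

```python
import math

def normal_pdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Bell curve. The 1/(sigma*sqrt(2*pi)) factor makes the total area equal 1."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def area_under(f, lo: float, hi: float, steps: int = 100_000) -> float:
    """Approximate the area under f between lo and hi with the trapezoidal rule."""
    width = (hi - lo) / steps
    total = 0.5 * (f(lo) + f(hi))
    for i in range(1, steps):
        total += f(lo + i * width)
    return total * width

print(area_under(normal_pdf, -10, 10))  # essentially the whole curve
print(area_under(normal_pdf, -1, 1))    # share within one standard deviation
```

The second line gives the familiar figure of roughly 68% of values lying within one standard deviation of the mean.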